Final Dataset Verification for 797802, 6946290525, 911787612, 693125885, 648971009, 20784200

Final dataset verification for identifiers such as 797802, 6946290525, and the others listed above is the last check on data integrity before a dataset is put to use. Each identifier should pass a format check and, where its scheme defines a check digit, a checksum validation. Cross-referencing against an authoritative database then establishes that the identifier is legitimate. Inaccuracies that slip through can distort the decisions built on the data, so stakeholders need to understand the techniques and best practices for maintaining data quality.

Importance of Dataset Verification

Dataset verification can look like a procedural formality, but the integrity and reliability of data-driven outcomes depend on it.

Effective verification processes are crucial for identifying inaccuracies, inconsistencies, and anomalies within datasets.

Techniques for Validating Identifiers

Ensuring the integrity of identifiers within datasets is a fundamental aspect of the overall verification process.

Effective identifier checks utilize various validation methods, including format validation, checksum algorithms, and cross-referencing with authoritative sources.

Together, these techniques confirm that each identifier is well-formed and corresponds to a legitimate entity, which supports informed decision-making and trust in the dataset.
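The three checks named above can be sketched in a few lines of Python. This is a minimal illustration, not a definitive implementation: the article does not say which format or checksum scheme these identifiers follow, so the 6-to-10-digit pattern and the use of the Luhn algorithm are assumptions, and `reference_ids` is a hypothetical stand-in for whatever authoritative source is available.

```python
import re

# Assumed format rule: 6 to 10 ASCII digits. The real rule depends on the
# identifier scheme, which the article does not specify.
ID_PATTERN = re.compile(r"[0-9]{6,10}")


def has_valid_format(identifier: str) -> bool:
    """Format validation: the whole string must match the assumed pattern."""
    return ID_PATTERN.fullmatch(identifier) is not None


def luhn_valid(identifier: str) -> bool:
    """Checksum validation using the Luhn algorithm (an illustrative choice).

    Expects a string of ASCII digits; call has_valid_format first.
    """
    total = 0
    # Walk the digits right to left, doubling every second one.
    for position, char in enumerate(reversed(identifier)):
        digit = int(char)
        if position % 2 == 1:
            digit *= 2
            if digit > 9:
                digit -= 9
        total += digit
    return total % 10 == 0


def validate_identifier(identifier: str, reference_ids: set) -> dict:
    """Run all three checks and report each result separately."""
    format_ok = has_valid_format(identifier)
    return {
        "format_ok": format_ok,
        # Only attempt the checksum when the format check has passed.
        "checksum_ok": format_ok and luhn_valid(identifier),
        # Cross-reference: membership in a hypothetical authoritative set.
        "in_reference": identifier in reference_ids,
    }
```

Reporting the three results separately, rather than a single pass or fail, makes it easier to see whether a rejected identifier is malformed, mistyped, or simply unknown to the reference source.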

Implications of Data Inaccuracies

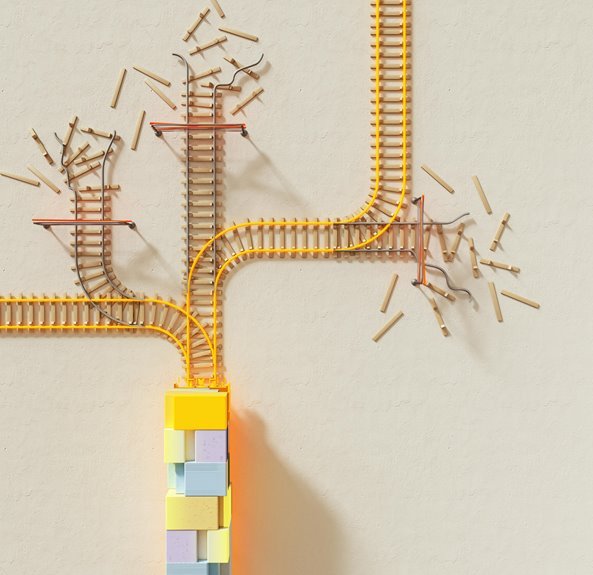

Data inaccuracies can significantly undermine the credibility and utility of datasets, leading to misguided decisions and erroneous conclusions.

Such data errors can complicate risk assessment processes, resulting in compliance issues that erode trust among stakeholders.

Furthermore, the financial impact of these inaccuracies often manifests in operational inefficiencies, ultimately hindering an organization’s ability to function effectively and maintain its competitive edge in the market.

Best Practices for Maintaining Data Quality

Maintaining high data quality is essential for organizations aiming to make informed decisions and optimize operational efficiency.

Implementing a robust data governance framework supports effective data cleansing and quality assurance. Regular validation and consistency checks catch errors early, before inaccuracies spread to downstream systems.
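A routine consistency check of the kind described above might look like the following sketch. It assumes the records are plain dictionaries with the identifier stored under an `"id"` key; both the record shape and the function name `consistency_report` are hypothetical, chosen only for illustration.

```python
from collections import Counter


def consistency_report(records: list, key: str = "id") -> dict:
    """Flag records with a missing identifier and identifiers that repeat."""
    # Positions of records whose identifier is absent or empty.
    missing = [index for index, record in enumerate(records)
               if not record.get(key)]
    # Count how often each non-empty identifier occurs.
    counts = Counter(record[key] for record in records if record.get(key))
    duplicates = sorted(value for value, n in counts.items() if n > 1)
    return {"missing": missing, "duplicates": duplicates}


records = [
    {"id": "797802"},
    {"id": "797802"},    # repeated identifier
    {"id": ""},          # missing identifier
    {"id": "20784200"},
]
report = consistency_report(records)
```

Running a report like this on a schedule, and tracking how the counts change over time, is one simple way to turn "regular validation" into something measurable.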

Conclusion

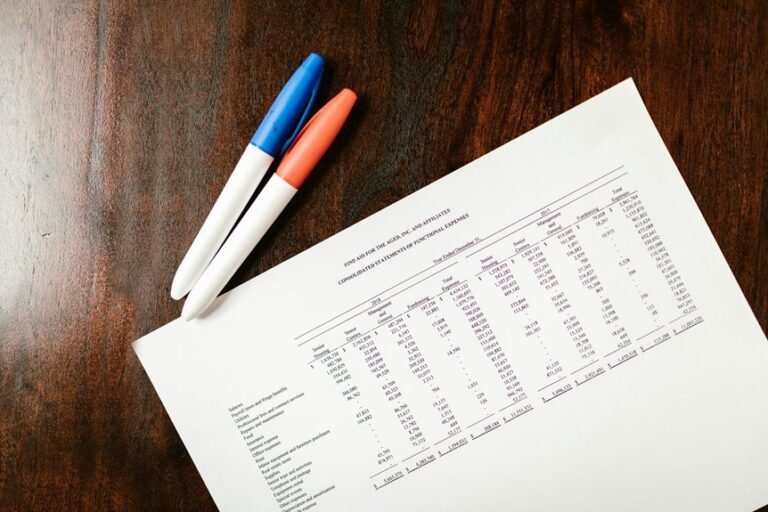

In conclusion, verifying identifiers such as 797802, 6946290525, 911787612, 693125885, 648971009, and 20784200 is akin to auditing a financial ledger, where accuracy is paramount. By employing rigorous validation techniques and adhering to best practices, stakeholders can significantly reduce the risks associated with data inaccuracies. This diligence fosters trust and grounds decision-making in reliable, validated information.